Emojify

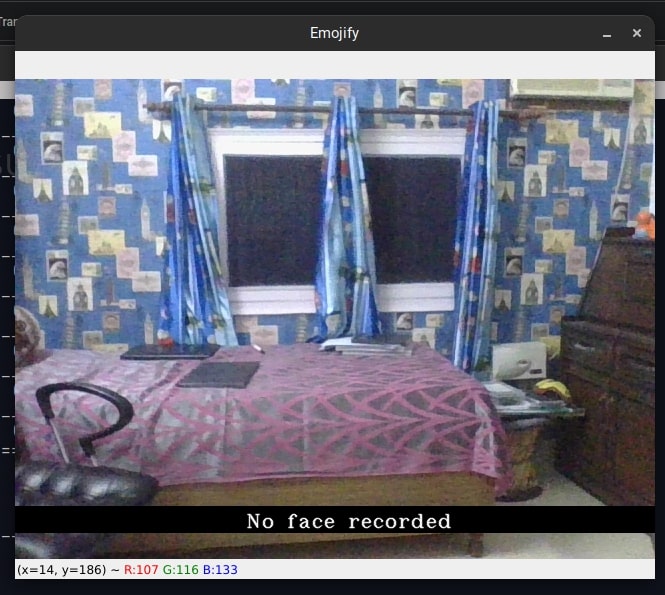

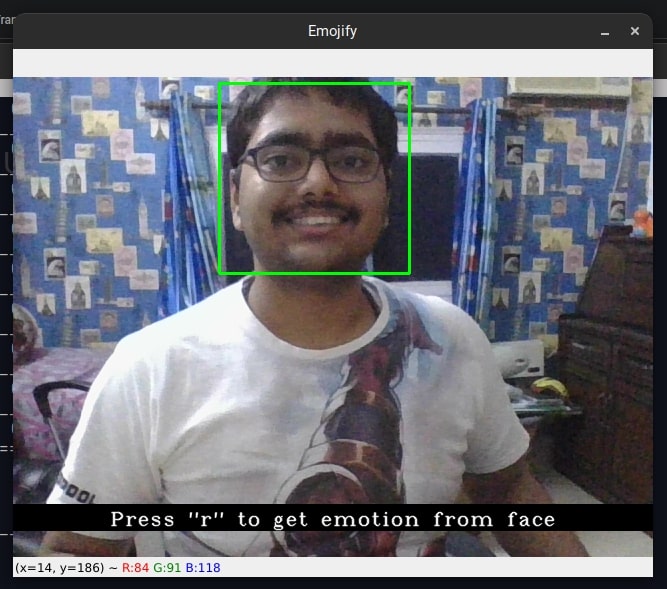

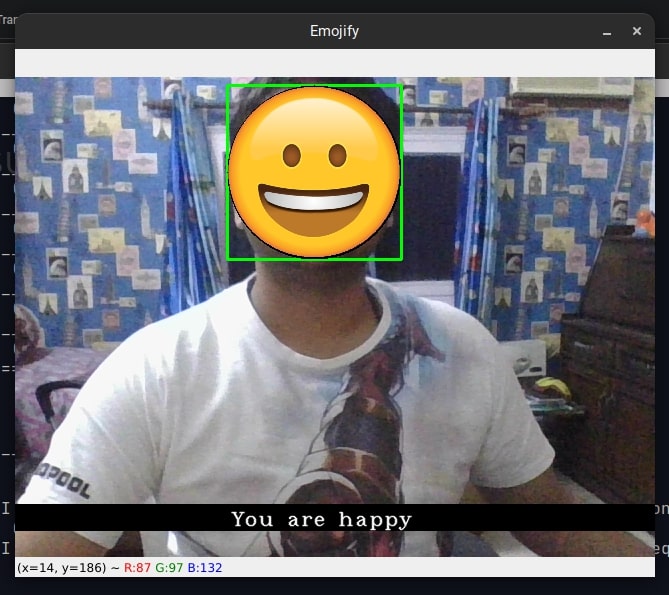

Emotion Detector using CNNs

With advancements in computer vision and deep learning, it is now possible to detect human emotions from images. The goal of this project is to classify human facial expressions into 7 classes (angry, fear, happy, sad, etc.) and make emojis out of these expressions. Facial expressions are classified using deep learning (a CNN), and OpenCV is used to filter and map the corresponding emojis or avatars. You can find the code on GitHub.

Motivation

Facial emotion detection is an emerging field with applications such as social robots, measuring customer satisfaction, gaming, and criminal investigations. Non-verbal communication methods like facial expressions, eye movement, and hand gestures are used in many applications of human-computer interaction. This facial emotion recognition system identifies seven basic facial emotions: anger, disgust, fear, happy, neutral, sad, and surprise. Facial emotion recognition techniques are based on either appearance features or geometric features. Facial emotion detection is not an easy task because there is no perfect distinction between emotions, and expressions are complex and highly variable.

Objectives

The objectives of this project are as follows:

- The model is trained and tested on the FER2013 dataset, which comprises 7 classes of human emotions (angry, disgust, fear, happy, neutral, sad, surprise) with around 28K training images and 7K test images.
- The FER2013 (facial expression recognition) dataset consists of 48x48 pixel grayscale face images, which are centered and occupy roughly equal space for better classification.
- A Convolutional Neural Network is built that consists of 4 2D convolutional layers, 2 flattening layers, max pooling, dropout, batch normalization, etc.
- The ReLU activation function is used in the 2D convolutional layers and hidden layers, and softmax activation is used in the final output layer.

Algorithm implemented

The implemented algorithm is:

User Interface

We used OpenCV to develop the UI of our project. The interface is pretty simple. Directions on how to use the program are as follows:

For detecting faces, we used a Haar Cascade, a fast object detection algorithm. Boosting is used to produce a strong prediction out of a combination of “weak” learners, which are then applied to various subsections of the input image. Haar Cascades are widely used for face detection.

Results

After hyperparameter tuning, we set the learning rate to 0.0001 and trained our model for 50 epochs with the Adam optimizer, using categorical cross-entropy as the loss function. We got a training accuracy of 82% and a test accuracy of 65%.

Acknowledgements

This project was made by Shubham Ojha (me), Saksham Agarwal and Rushikesh Metkar.
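The write-up mentions a softmax activation in the final output layer and categorical cross-entropy as the loss function. As a minimal sketch of what those two pieces compute for a single face, the pure-Python example below turns seven raw output scores into probabilities, picks the most likely emotion, and evaluates the loss against a known label. The class order, the example scores, and the function names are assumptions made for illustration; they are not taken from the project code, which would normally rely on a deep learning library for these steps.

```python
import math

# Assumed class order (alphabetical, as listed in the text). The real order
# depends on how the training labels were encoded.
EMOTIONS = ["angry", "disgust", "fear", "happy", "neutral", "sad", "surprise"]


def softmax(logits):
    """Convert raw output scores into probabilities that sum to 1."""
    peak = max(logits)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(probs, true_index):
    """Loss for one sample whose one-hot label is 1 at true_index."""
    return -math.log(max(probs[true_index], 1e-12))  # clamp to avoid log(0)


def predict_emotion(logits):
    """Return (label, probability) for the most likely class."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EMOTIONS[best], probs[best]


# Hypothetical raw scores from the final layer for one face.
logits = [0.2, -1.0, 0.1, 3.0, 0.5, -0.3, 0.0]
label, confidence = predict_emotion(logits)
loss = categorical_cross_entropy(softmax(logits), EMOTIONS.index("happy"))
print(label, round(confidence, 3), round(loss, 3))
```

The predicted label is the one that would be mapped to an emoji or avatar. The loss shrinks toward 0 as the probability assigned to the true class approaches 1.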
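The Haar Cascade description in this write-up talks about combining “weak” learners into a strong prediction and applying them to subsections of the input image. The toy sketch below illustrates only that idea. It is not OpenCV's implementation, which is what the project actually uses. The two Haar-like features, the thresholds, the vote weights, and the 8x8 test image are all made-up values, whereas boosting would learn them from data. A real cascade also chains many such classifiers in stages so that most windows are rejected early.

```python
def region_sum(img, top, left, height, width):
    """Sum of pixel values inside a rectangle of a 2D list image."""
    return sum(sum(row[left:left + width]) for row in img[top:top + height])


def haar_horizontal_edge(img, top, left, size):
    """Two-rectangle Haar-like feature: top half minus bottom half."""
    half = size // 2
    return (region_sum(img, top, left, half, size)
            - region_sum(img, top + half, left, half, size))


def haar_vertical_edge(img, top, left, size):
    """Two-rectangle Haar-like feature: left half minus right half."""
    half = size // 2
    return (region_sum(img, top, left, size, half)
            - region_sum(img, top, left + half, size, half))


# Each weak learner is (feature function, threshold, vote weight).
# Hypothetical values; a boosting algorithm would learn these.
WEAK_LEARNERS = [
    (haar_horizontal_edge, 600, 0.6),
    (haar_vertical_edge, 600, 0.4),
]


def strong_classifier(img, top, left, size):
    """Weighted vote of the weak learners; True when the vote is positive."""
    vote = 0.0
    for feature, threshold, weight in WEAK_LEARNERS:
        decision = 1 if feature(img, top, left, size) > threshold else -1
        vote += weight * decision
    return vote > 0


def detect(img, size=4, step=2):
    """Slide a square window over the image and collect accepted positions."""
    hits = []
    for top in range(0, len(img) - size + 1, step):
        for left in range(0, len(img[0]) - size + 1, step):
            if strong_classifier(img, top, left, size):
                hits.append((top, left))
    return hits


# Toy 8x8 image: a bright band (rows 2-3, columns 2-5) above a dark area.
image = [[0] * 8 for _ in range(8)]
for r in (2, 3):
    for c in range(2, 6):
        image[r][c] = 100

print(detect(image))  # [(2, 2)]
```

Only the window whose top half fully covers the bright band passes the weighted vote. Every other window is rejected, which mirrors how a detector scans subsections of the image and keeps only the positions that the combined classifier accepts.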